SILCO: Show a Few Images, Localize the Common Object

Tao HU, Pascal Mettes, Jia-Hong Huang, Cees G. M. Snoek

University of Amsterdam

in ICCV 2019

Paper | Code

Abstract

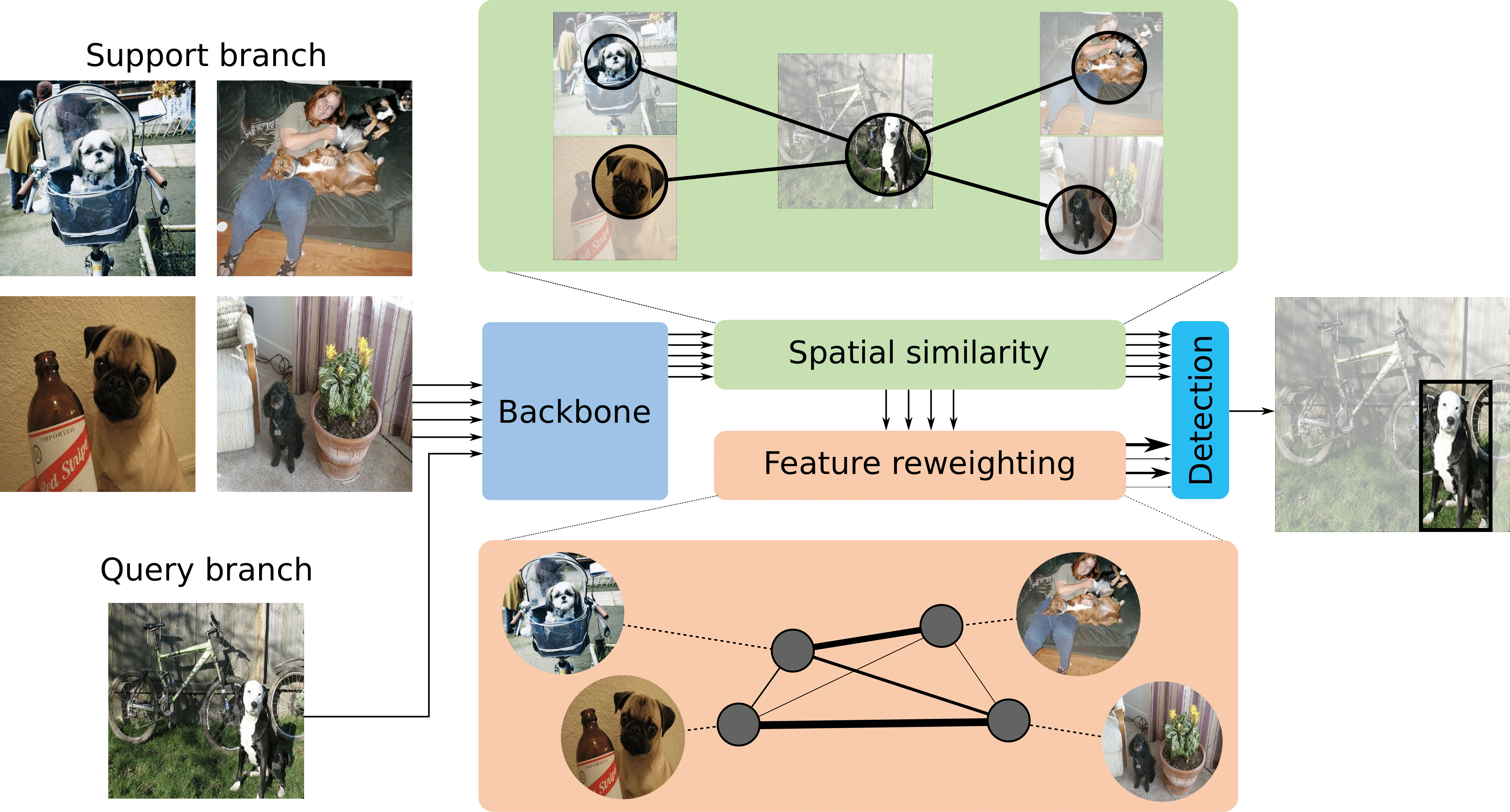

Few-shot learning is a nascent research topic, motivated by the fact that traditional deep learning requires tremendous amounts of data. In this work, we propose a new task along this research direction, which we call few-shot common-localization. Given a few weakly-supervised support images, we aim to localize the common object in the query image without any box annotation. This task differs from standard few-shot settings, since we aim to address the localization problem, rather than the global classification problem. To tackle this new problem, we propose a network that aims to get the most out of the support and query images. To that end, we introduce a spatial similarity module that searches the spatial commonality among the given images. We furthermore introduce a feature reweighting module, which balances the influence of different support images through graph convolutional networks. To evaluate few-shot common-localization, we repurpose and reorganize the well-known Pascal VOC and MS-COCO datasets, as well as a video dataset from Imagenet VID. Experimental evaluation on the new settings for few-shot common-localization shows the importance of searching for spatial similarity and feature reweighting, outperforming baselines from related tasks.

Brief Description of the Problem and Method

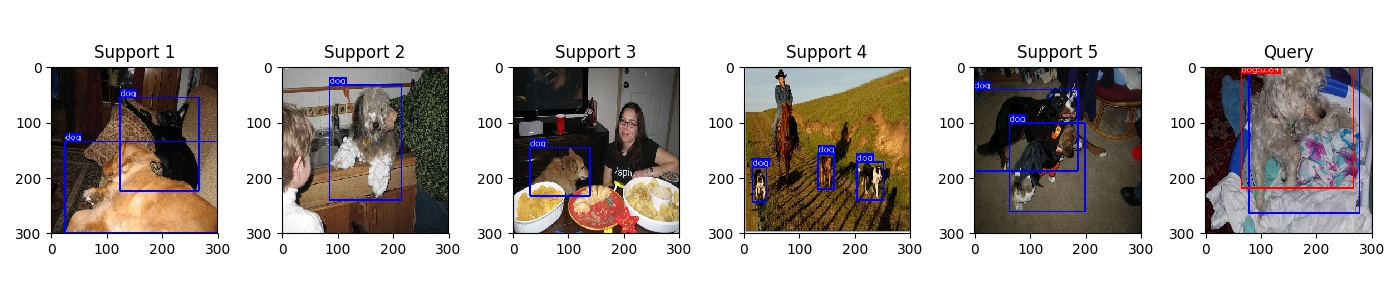

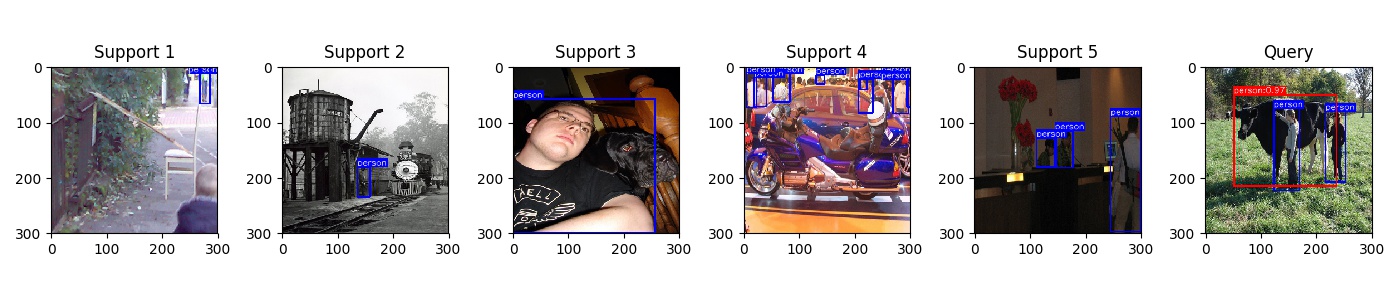

Few-shot common-localization. Starting from a few weakly-supervised support images and a query image, we localize the common object in the query image without the need for a single box annotation. Note that we only know that there is a common object shared by the support images and the query image; we do not know where it is or what it is.

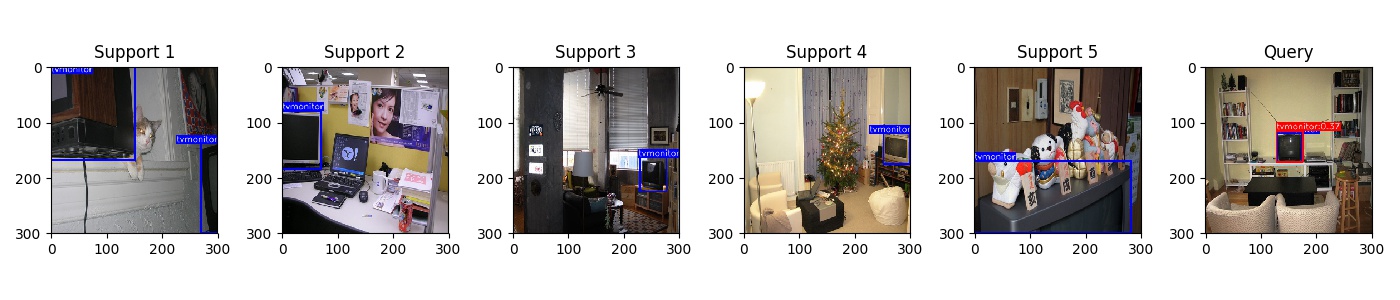

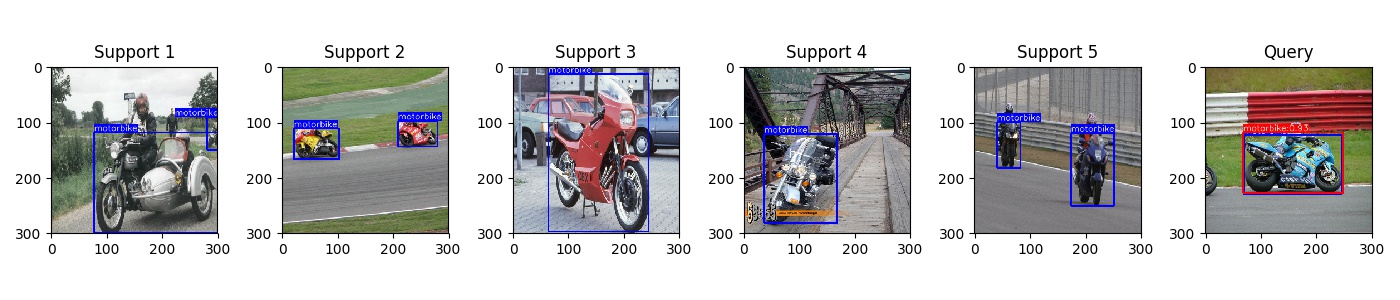

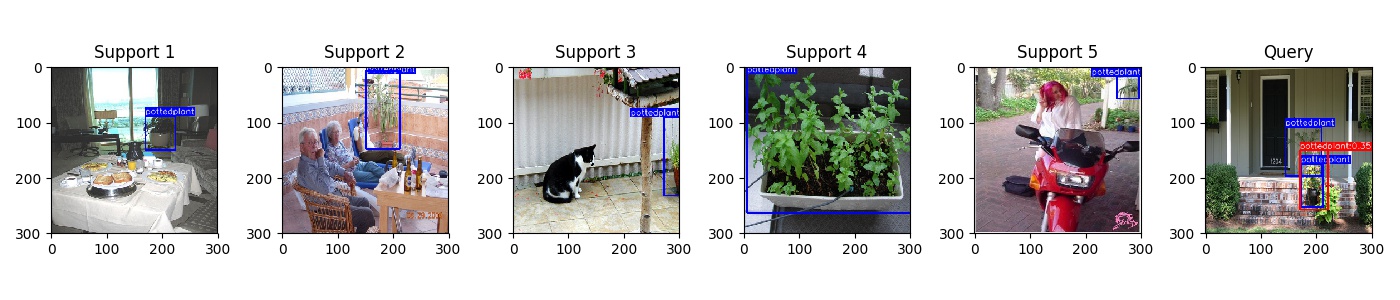
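
To make the idea of searching for spatial commonality more concrete, here is a minimal NumPy sketch. It is an illustration under our own assumptions, not the network from the paper: the function name `spatial_similarity_map`, the use of cosine similarity, and the max-then-mean aggregation are all hypothetical choices made for this example. Each query location is compared with every location in each support feature map, and locations that find a close match in all support images receive a high score.

```python
import numpy as np

def spatial_similarity_map(query_feat, support_feats, eps=1e-8):
    """Hypothetical helper (not from the paper).

    query_feat:    array of shape (C, H, W), features of the query image.
    support_feats: array of shape (K, C, Hs, Ws), features of K support images.
    Returns an (H, W) map; high values mark query locations that have a
    close match in every support image.
    """
    c, h, w = query_feat.shape
    # Flatten spatial dimensions and L2-normalize each location's feature vector.
    q = query_feat.reshape(c, h * w)
    q = q / (np.linalg.norm(q, axis=0, keepdims=True) + eps)

    per_support = []
    for s in support_feats:
        s = s.reshape(c, -1)
        s = s / (np.linalg.norm(s, axis=0, keepdims=True) + eps)
        sim = q.T @ s                        # cosine similarity, shape (H*W, Hs*Ws)
        per_support.append(sim.max(axis=1))  # best match per query location

    # Assumption: average the best-match scores over the support images.
    return np.mean(per_support, axis=0).reshape(h, w)

# Toy data: random features with one shared "common object" vector planted
# at query location (2, 3) and at one location in each support image.
rng = np.random.default_rng(0)
common = rng.normal(size=64)
query = rng.normal(size=(64, 5, 5))
query[:, 2, 3] = common
supports = rng.normal(size=(3, 64, 4, 4))
supports[0, :, 0, 1] = common
supports[1, :, 3, 3] = common
supports[2, :, 1, 0] = common

heat = spatial_similarity_map(query, supports)
row, col = np.unravel_index(heat.argmax(), heat.shape)
print(row, col)
```

In this toy setting the peak of `heat` falls on the planted location, because only that query location has a near-identical match in all three support maps. The feature reweighting module described in the abstract is not modelled here; the plain mean over support images stands in for it.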

Qualitative Result

The bounding boxes and class labels shown here are for illustration only; we did not use this information in our experiments.

Miscellaneous

More Pascal VOC success and failure cases

More MS COCO success and failure cases

Acknowledgement

This page style is borrowed from https://nvlabs.github.io/SPADE/